Building With Jenkins Inside an Ephemeral Docker Container

In this tutorial, you’ll learn:

- Various approaches to running builds with Jenkins inside of containers

- Which decisions we made as a team and why

- Lessons we learned while operating our platform

- Context for the in-depth tutorial about how to create your own ephemeral build environments using Jenkins

- How to productionize this setup if you want to use it as your main Jenkins server instead of a local development server

Thinking inside the container means building inside one as well. Today I’d like to open up the box on how my team is currently combining Jenkins and Docker to serve Riot Engineering teams. In a related post, I promised I would discuss the actual build slave and Jenkins configuration directly. In some ways this is the main event—if you don’t have a Jenkins server ready to receive slaves, it’s a good idea to go back through and follow along with the previous posts.

Before I begin the tutorial proper, however, let’s talk about the approach and alternatives. There are many ways to use Docker containers as build slaves. Even narrowing the field to just using them on Jenkins still presents plenty of options. From my research and discovery, I feel that there are two major approaches you can take. Conceptually, I’ll refer to these as the “Docker execution” and “Docker ephemeral slave” models.

These two approaches have roots in how Jenkins connects and communicates to your build slave. In the execution model, Jenkins connects in a traditional fashion: by running an agent on an existing VM or physical hardware, where it expects a running Docker host. In the ephemeral model, Jenkins connects to a Docker container directly and treats that as a build slave. The distinction between the two options is important, so let’s take a moment to break each one down.

DOCKER EXECUTION MODEL

In the execution model, we architecturally assume the slave is a Docker host but we treat it as a physical machine. When a Jenkins job starts, it syncs/creates a working directory on the slave directly, leverages docker run and docker exec commands to spin up a container, and then mounts its local workspace inside. The container is a virtual scratchpad and isolated environment. It can have all the custom versions of tools and binaries that we need to compile the source code we’ve mounted into the container.

When the build job is complete, all the binaries and build artifacts it produced will be on the slave in the traditional Jenkins workspace. Jenkins can shut down the container safely and do any post-build cleanup as normal.
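As a rough illustration of the execution model, the sketch below assembles the kind of `docker run` invocation a job might issue on the slave. The image name, workspace path, and build command are hypothetical placeholders, and this is not how any particular plugin builds its command line; it only shows the workspace being mounted into a throwaway container.

```python
import shlex


def build_docker_run_command(image, workspace, build_command, mount_point="/workspace"):
    """Return the argv for a one-off build container with the workspace mounted.

    All argument values are illustrative; only standard `docker run` flags are used.
    """
    return [
        "docker", "run",
        "--rm",                              # remove the container when the build exits
        "-v", f"{workspace}:{mount_point}",  # mount the Jenkins workspace into the container
        "-w", mount_point,                   # run the build from the mounted directory
        image,
        "sh", "-c", build_command,
    ]


if __name__ == "__main__":
    # Hypothetical image and paths; the command is printed, not executed.
    argv = build_docker_run_command(
        image="example/build-env:latest",
        workspace="/var/jenkins/workspace/my-job",
        build_command="make all",
    )
    print(shlex.join(argv))
```

Because the build writes into the mounted directory, anything it produces ends up in the slave’s workspace, which is why Jenkins can still archive artifacts after the container is gone.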

This model is best represented by the Cloudbees Custom Build Environment Plugin, which is open-source and maintained by the owners of the Jenkins source repository.

DOCKER EPHEMERAL SLAVE MODEL

The ephemeral model aims to leverage the autonomous and isolated nature of Docker containers to scale a Jenkins build farm to meet any demand placed upon it. Instead of the traditional array of pre-allocated slaves or at-the-ready virtual machines, this model treats the entire container itself as a slave.

We spin up a container whenever there is a demand for a Jenkins executor, automatically configure Jenkins to accept this container as a new slave, execute the job within it, and finally shut down the container and de-allocate the slave. It’s certainly more complicated than the execution model.
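The lifecycle described above can be sketched as a context manager. This is a toy, in-memory model: `FakeCloud` and `ephemeral_slave` are hypothetical names invented for illustration and are not the Docker Plugin’s API. It only shows the ordering of the four steps and that teardown happens even when the job fails.

```python
from contextlib import contextmanager


class FakeCloud:
    """In-memory stand-in for a Docker host plus Jenkins' node list (hypothetical)."""

    def __init__(self):
        self.running = set()     # container ids currently running
        self.registered = set()  # container ids Jenkins accepts as slaves
        self._next_id = 0

    def start_container(self, image):
        self._next_id += 1
        container_id = f"{image}-{self._next_id}"
        self.running.add(container_id)
        return container_id

    def stop_container(self, container_id):
        self.running.discard(container_id)


@contextmanager
def ephemeral_slave(cloud, image):
    """Provision a container as a slave and always tear it down afterwards."""
    container_id = cloud.start_container(image)  # 1. spin up on demand
    cloud.registered.add(container_id)           # 2. register it as a slave
    try:
        yield container_id                       # 3. the job runs here
    finally:
        cloud.registered.discard(container_id)   # 4. de-allocate the slave
        cloud.stop_container(container_id)       #    and shut down the container


if __name__ == "__main__":
    cloud = FakeCloud()
    with ephemeral_slave(cloud, "example-build-env") as slave:
        print("building on", slave)
    print("containers left running:", len(cloud.running))
```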

This approach has several competing plugins, generally centered around how to maintain and build the Docker cloud you want to use. So far, there are plugins for Kubernetes, Mesos, and “pure” Docker approaches.

WHICH MODEL TO CHOOSE?

Both models represent valid approaches to solving the problem. At Riot, we were interested in getting away from allocating build boxes and “executors” to specific purposes, so the ephemeral model was attractive to us. We liked the idea of having a container represent the entire slave. So we went with the Docker Plugin to achieve our goals.

Over time, we’ve been happy with that choice. If you search for a Docker plugin for Jenkins, you’ll find both a Docker Plugin and a Yet Another Docker Plugin. As with many open source development efforts, there’s some history here. Much of our original work with Docker and Jenkins was on early versions of the Docker Plugin, spearheaded by developer Kanstantsin Shautsou. He eventually forked that work into Yet Another Docker Plugin and continued development there. Recently, Cloudbees developers have picked up the original Docker Plugin and advanced it significantly. This tutorial focuses on that plugin because of the Cloudbees support it currently enjoys. That said, having tested both, I can say the Yet Another Docker Plugin also works well. Which one you choose is ultimately up to you, but for the sake of simplicity I’ll cover just one. I would like to add that these tutorials would not have been possible without Kanstantsin’s work on the original Docker Plugin and the Yet Another Docker Plugin. You can find his work here on Github.

TUTORIAL

This is the longest tutorial I’ve written, so I’ve decided to link it here to save space (and to separate the conversation from the implementation). The tutorial takes about 30-45 minutes to complete, assuming you’ve followed the previous posts. You can check out the full tutorial here:

Alternatively if you want to get up and running as quickly as possible and skip the construction, you can pull down the complete tutorial and follow the README instructions here:

LESSONS LEARNED

Operating this platform comes with its own set of distinct challenges. Here’s a short list of things to keep in mind that hopefully will save you time and effort:

OPERATING DOCKERHOSTS AT SCALE IS NOT “SIMPLE”

Disk space is a big deal. Docker images eat space. Every running container eats space. Sometimes containers die and eat space. Teams will create build slaves that use volumes; these eat even more disk space.

Monitoring disk space on your Docker hosts is essential. Do not let it run out. Be proactive.
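A minimal sketch of a proactive disk check, using only the Python standard library. The 80% threshold is an assumption chosen for illustration, not a figure from our setup; in practice you would point the check at wherever your Docker host stores its data and feed the result into whatever alerting you already run.

```python
import shutil


def used_percent(path):
    """Return the percentage of the filesystem holding `path` that is in use."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


def needs_attention(percent_used, threshold=80.0):
    """True once usage reaches the alert threshold (80% is an assumed default)."""
    return percent_used >= threshold


if __name__ == "__main__":
    # "/" is used so the sketch runs anywhere; on a Docker host you would
    # check the Docker data directory (/var/lib/docker by default on Linux).
    path = "/"
    pct = used_percent(path)
    status = "ALERT" if needs_attention(pct) else "ok"
    print(f"{path}: {pct:.1f}% used [{status}]")
```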

CLEANING UP IMAGES AND CONTAINERS IS LIKE GARBAGE COLLECTION

Every time a new image is pushed to a Docker host, it leaves “dangling” unused layers behind. Cleaning these up has to become routine or disk space will be a problem.

Container slaves can sometimes halt or not clean up - paying attention to “exited” and “dead” containers is a necessary part of keeping a Docker host clean.
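One way to make this routine is a small script run on a schedule. The sketch below is an assumption about how such a job could look, not the tooling we run: it lists exited and dead containers and dangling images with standard Docker CLI filters, then removes them. The `run` parameter is a hypothetical hook so the logic can be exercised without a Docker host.

```python
import subprocess

# Standard Docker CLI queries; -q prints only IDs, one per line.
LIST_STOPPED_CONTAINERS = [
    "docker", "ps", "-a", "-q",
    "--filter", "status=exited",
    "--filter", "status=dead",
]
LIST_DANGLING_IMAGES = ["docker", "images", "--filter", "dangling=true", "-q"]


def run_docker(argv):
    """Run a Docker CLI command and return its stdout (requires a Docker host)."""
    return subprocess.run(argv, check=True, capture_output=True, text=True).stdout


def cleanup(run=run_docker):
    """Remove exited/dead containers, then dangling images. Returns the IDs removed."""
    containers = run(LIST_STOPPED_CONTAINERS).split()
    if containers:
        run(["docker", "rm", *containers])
    # Containers go first so images they referenced are free to be deleted.
    images = run(LIST_DANGLING_IMAGES).split()
    if images:
        run(["docker", "rmi", *images])
    return containers, images


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    def dry_run(argv):
        print(" ".join(argv))
        return ""

    cleanup(run=dry_run)
```

Calling `cleanup()` with no arguments runs the real commands. Note that `docker rmi` fails for an image still used by a running container, so a production version would want to handle that error rather than abort.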

ADDING NEW IMAGES/SLAVES TO A FLEET OF DOCKERHOSTS IS TIME CONSUMING

Initially, engineers had to request that their images be added to the Jenkins configuration. We soon noticed this process was an unnecessary impediment. In our experience, the average engineering team was changing their Dockerfile several times a week when in early development.

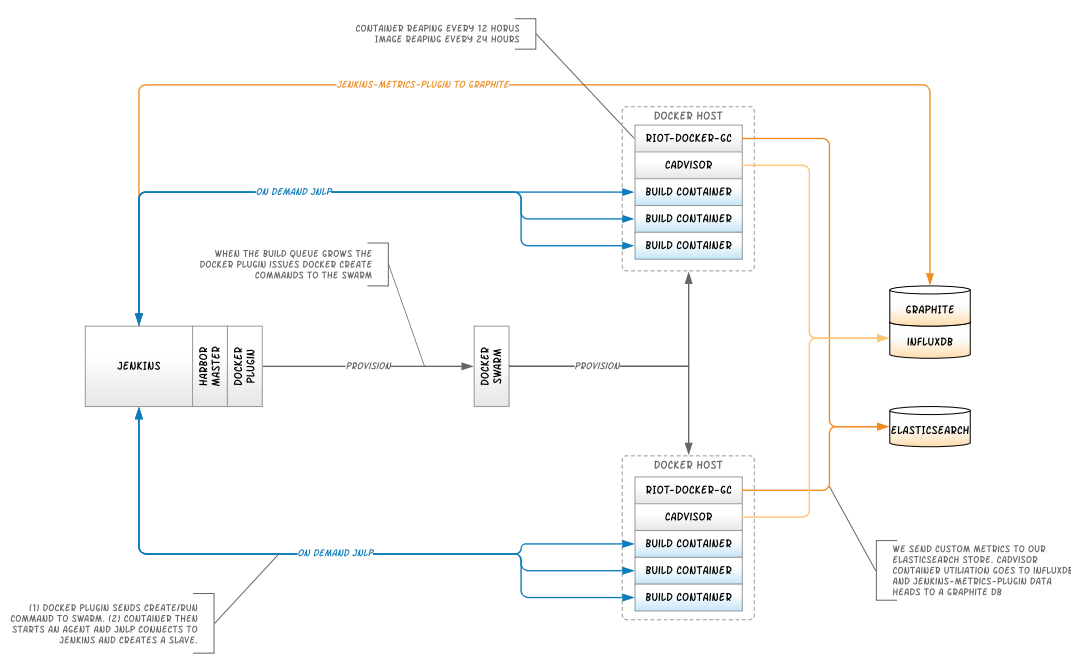

We created a tool we call “Harbormaster” that automatically verifies images engineers supply and auto-configures Jenkins via a Groovy API. Harbormaster tests each image against a set of core criteria and verifies the slave will work. It generates test reports and configures Jenkins automatically.

MONITORING THE BEAST IS ESSENTIAL

We’re still evolving how we monitor Jenkins and our Docker Swarm. The art of monitoring a Docker Cloud is in a constant state of evolution and takes a unique twist when that cloud is a Build Farm and not an Application Farm.

As with Harbormaster, discussing monitoring here would've made this article far longer. I'll be talking more about monitoring all of this in production in a future blog.

JENKINS AUDITING CAN BITE YOU

Once, we were woken in the middle of the night by a Jenkins server crash due to disk space running out. Turns out, it had 100,000 tiny log files of “Create/Destroy” build slave entries. Jenkins keeps an audit log of every build slave it creates and destroys; pay attention or you’ll end up suffering death by a thousand cuts.
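A scheduled pruning job is one way to keep this kind of log build-up in check. The sketch below deletes files older than a given age under a directory you pass in. Where these entries live depends on your installation, so the directory is a parameter rather than a real Jenkins path, and the seven-day retention in the demo is an arbitrary assumption.

```python
import os
import tempfile
import time


def prune_old_files(directory, max_age_days, now=None):
    """Delete regular files under `directory` older than `max_age_days`.

    Returns the number of files removed.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400
    removed = 0
    for root, _dirs, files in os.walk(directory):
        for name in files:
            path = os.path.join(root, name)
            # Skip anything that is not a regular file (e.g. broken symlinks).
            if os.path.isfile(path) and os.path.getmtime(path) < cutoff:
                os.remove(path)
                removed += 1
    return removed


if __name__ == "__main__":
    # Demo against a throwaway directory instead of a real log location.
    with tempfile.TemporaryDirectory() as demo_dir:
        stale = os.path.join(demo_dir, "stale.log")
        open(stale, "w").close()
        ten_days_ago = time.time() - 10 * 86400
        os.utime(stale, (ten_days_ago, ten_days_ago))
        print("removed:", prune_old_files(demo_dir, max_age_days=7))
```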

There are more lessons, I’m sure. I’ll be talking about how to handle some of these in future posts and/or presentations, so please don’t hesitate to ask questions!

PRODUCTIONIZING YOUR SYSTEM AND OUR RESULTS

With the tutorial completed, you should have a fully functional Dockerized Jenkins sandbox. There is a mountain of potential in what you just created. There are a few things you need to do to “productionize” this.

- Configure your production master Jenkins server with the Docker plugin as you did in the tutorial above (install the plugin).

- Stand up a “production” Dockerhost. That’s a bit outside the scope of this tutorial. Our Continuous Delivery team uses CentOS VMs running on vSphere, but you can use AWS instances, physical machines, or just about any valid Dockerhost you want. That’s the power of Docker.

- If the Docker endpoints in your production ecosystem are protected by TLS certificates, you’ll have to create certificate credentials in Jenkins for them. This is not something you have to do on a local installation.

- Make sure your build slave image is somewhere your production Dockerhost can reach. At Riot we use a central Docker image repository, but you could use Dockerhub if you wanted, so long as your server has access to the public internet.

Many of these things can be involved to set up, so please don’t hesitate to ask questions and provide pointers!

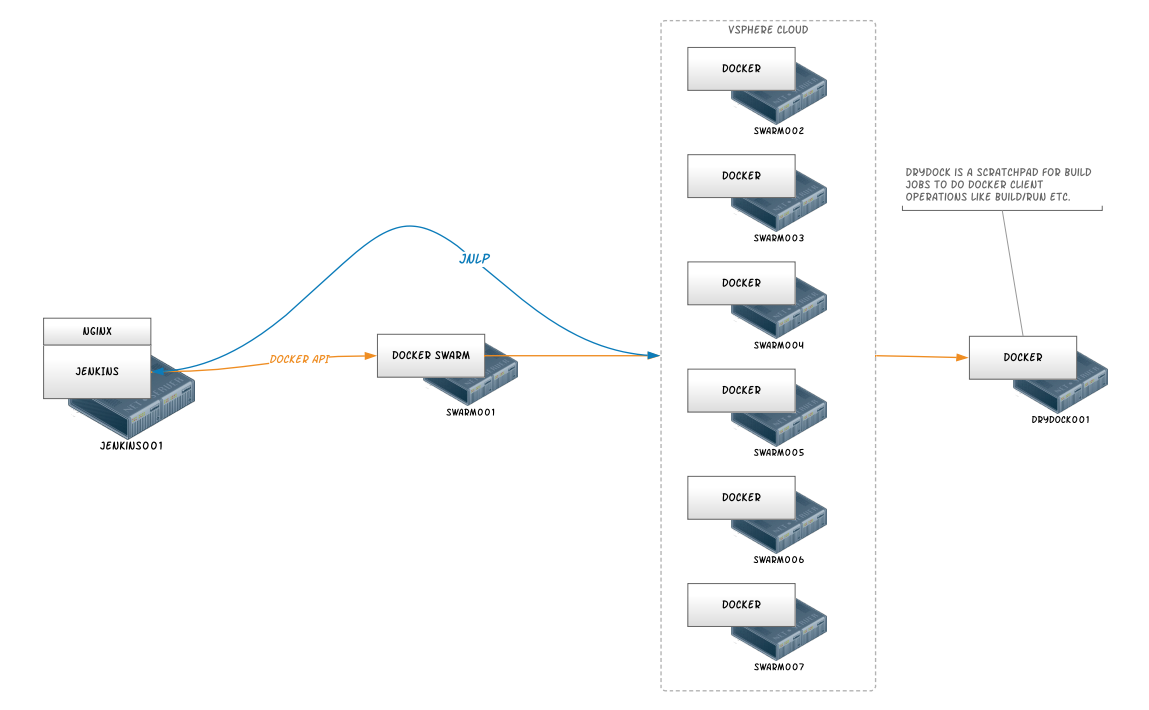

For us, this is not a “sandbox” or “playtime” setup. Our live environment changes only one component from what’s listed in this tutorial. Our production Dockerhost is actually a Docker Swarm app, backed by 6 Dockerhost machines. Here are some diagrams of how we have things set up right now (at the time of publication):

Abstract Architecture

Physical Architecture

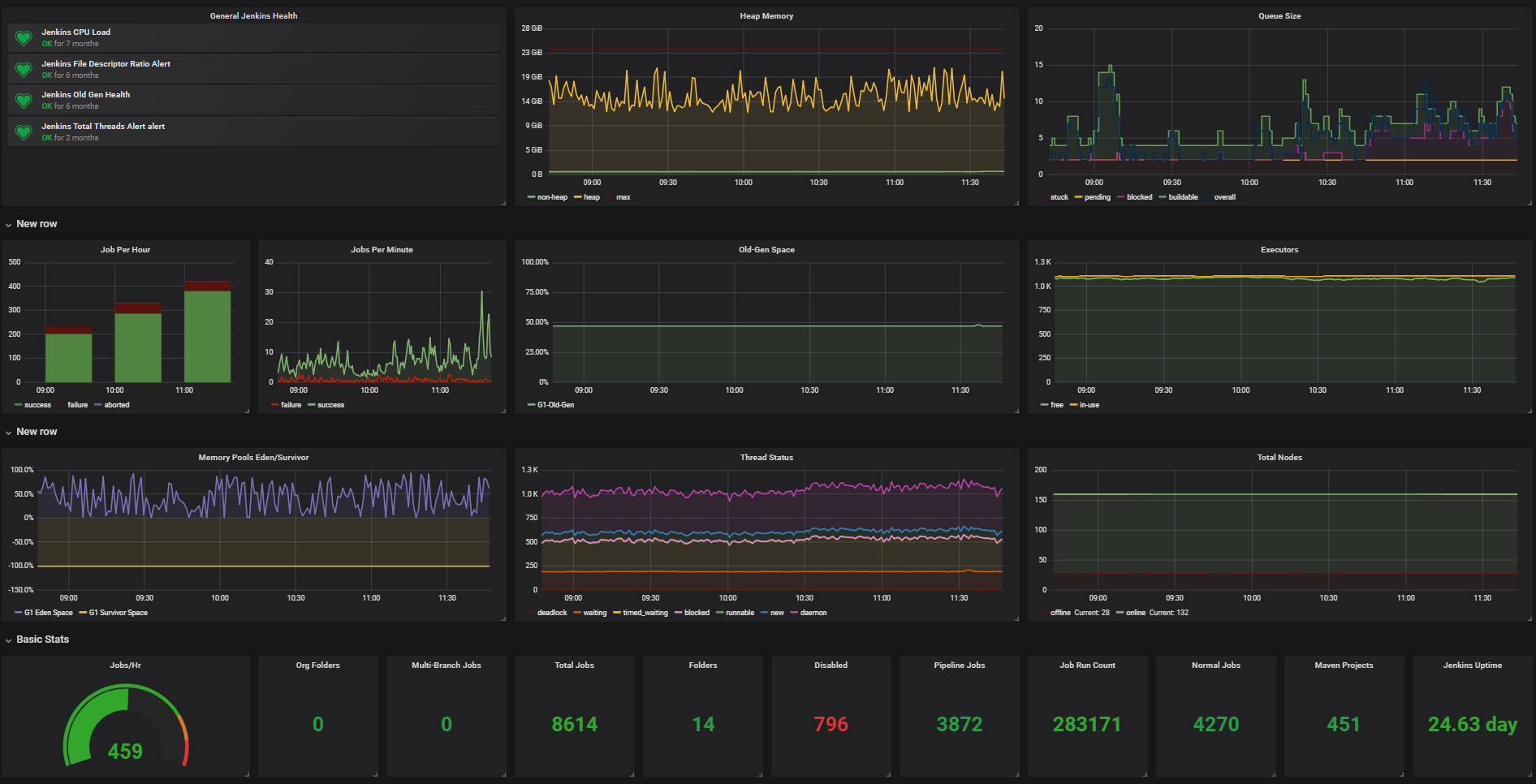

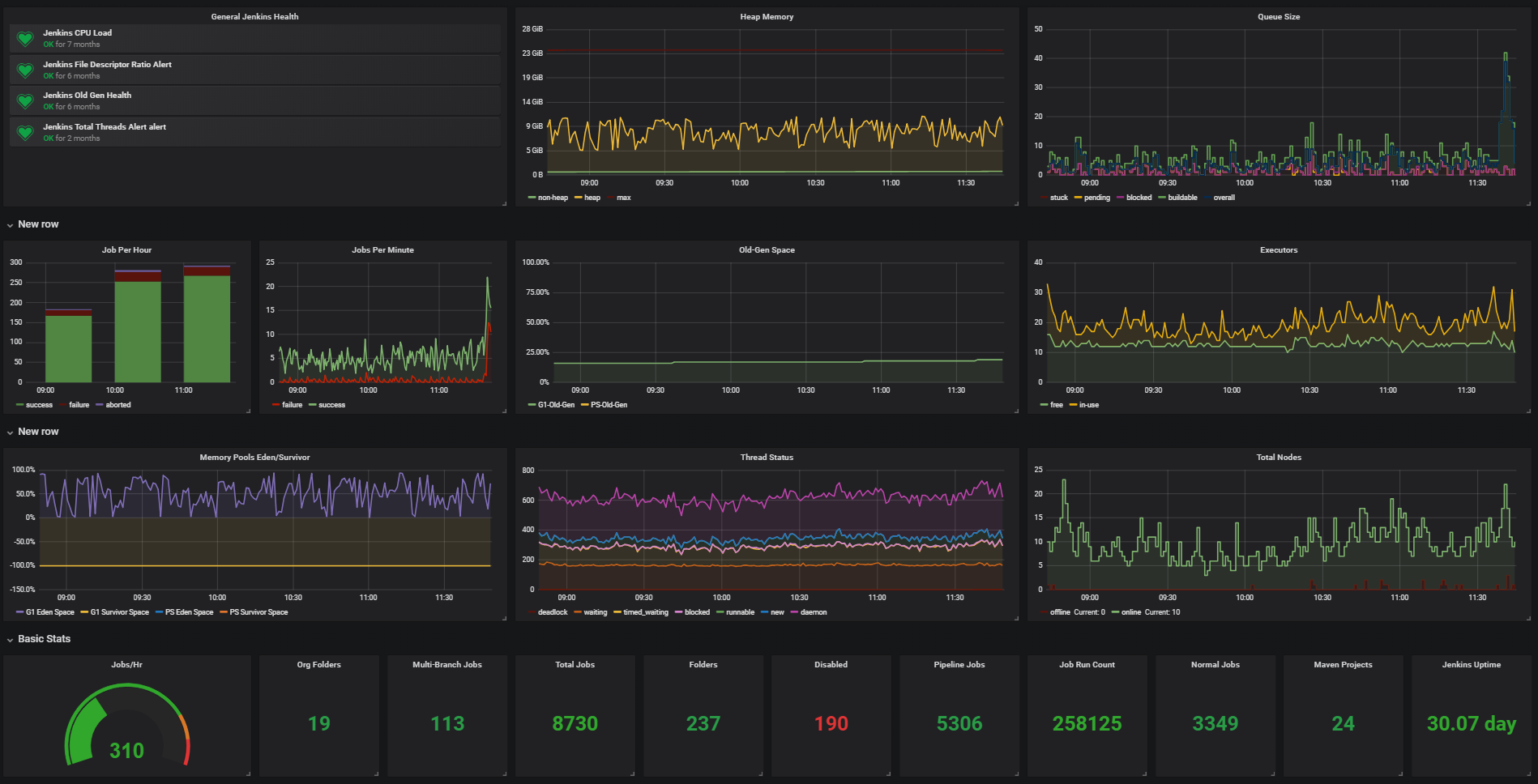

Here are some stats from our Production System:

Jenkins Stats

Jenkins Stat Details

- Average Queue Size: 30-40

- Provisioned Executors: ~1100

- Average Executors in Use: 100-150

- Total Build Nodes: ~100

- Avg Jobs Per Hour: ~700

Docker Jenkins Stats

Docker Jenkins Stat Details

- Average Queue Size: 10

- Average Executors in Use: 20

- Avg Jobs Per Hour: 400

We deployed this system on much earlier versions of Docker (1.2) and the Docker Plugin (0.8) early in 2014. Stability at that time was definitely a concern. I feel confident in saying that the current setup (Docker 1.10 + Docker Plugin 0.15) is very stable for our needs. In the year or so we’ve been using it, we’ve grown from a handful of build jobs and a few early-adopting teams to a huge range of both. Thanks to recent development by Cloudbees, the Docker Plugin is now at version 1.1.4. We’re in late-stage testing of that version and intend to move to it, as it no longer requires SSH connections and provisions significantly faster than it used to.

In fact, our Dockerized Jenkins platform now represents nearly 60% of all the build jobs we support on our entire Jenkins platform (7000 out of almost 12000). The only new jobs created on our Classic Jenkins setup are those that require Windows or OSX build environments (typically for mobile or game engine development). Teams have moved to the Dockerized platform for the following reasons:

- Defining build environments as Dockerfiles gives Engineering teams full control. In fact, teams rarely have to make their own environments because templated build environments defined as Docker Images cover most of their needs.

- These build environments can be tested locally with ease using Docker and replicate build farm behavior wherever they are deployed.

- Teams don’t have to be “systems administrators” of build VMs or boxes; it’s “hands off” ownership.

CONCLUSION

When I started this journey of blog writing in August of 2015, we already had a functional prototype. 30 months later we’ve received a tremendous amount of feedback and encouragement for putting this blog together and the results of the work define the future of our Continuous Delivery as a Service ecosystem.

By this point you should have a solid introduction to Docker basics, and know a bit about scaling Docker and securing it - all demonstrated through real-world use and application of Jenkins. On the flip side, you also should have a functional configuration of a full Jenkins test environment running against your local Docker for Mac or Windows Dockerhost, including the ability to create custom build slaves. It’s literally “Jenkins in a box.” I’ll dig deeper into this ecosystem in future posts, and describe the tools, APIs, and monitoring we’ve built.

I truly hope you’ve found this useful. The feedback we’ve received has been fantastic, and I love the enthusiasm of the community. As always, you can comment below; I highly appreciate it!

All of the files are available on my public Github; everything here is built with nothing but Open Source magic! Do not hesitate to create issues there, provide pull requests, etc.

For more information, check out the rest of this series:

Part I: Thinking Inside the Container

Part II: Putting Jenkins in a Docker Container

Part III: Docker & Jenkins: Data That Persists

Part IV: Jenkins, Docker, Proxies, and Compose

Part V: Taking Control of Your Docker Image

Part VI: Building with Jenkins Inside an Ephemeral Docker Container (this article)

Part VII: Tutorial: Building with Jenkins Inside an Ephemeral Docker Container

Part VIII: DockerCon Talk and the Story So Far