Hi, I’m Brandon “mochi” Wang, a software engineer on VALORANT’s Content Support team. I’m specifically going to focus on shaders, which are an essential part of computer graphics, my area of expertise. Shaders are the programs behind what most people consider a game’s graphics: they run on your GPU, take in scene and game data, and create the pixels seen on screen. I’m excited to talk about this because the intersection of engineering, art, and design is a personal passion of mine.

Hello! My name is Tomasz Mozolewski, and I’m a senior software engineer on our Competitive team. I’m here to talk about an event that has sparked a lot of discussion about League tech, and which happens to be one of the most requested Tech Blog topics of all time - Clash.

From the very beginning of VALORANT development, we made it a priority to build out cheating resistance to ensure competitive integrity. In this article, I’ll walk you through one of these anti-cheat systems - Fog of War. This is one of VALORANT’s key security systems, which focuses on combating cheats that take advantage of a game client’s access to information, like wallhacks.

Hi, my name is Joshua Parker, and I’m an engineer on our Champions team. I help create the systems that unlock new capabilities for champions in League. Although my work is typically focused on how we build out a new champion, it also means revamping older systems and smashing tech debt along the way to help our engine evolve and allow us to keep creating new exciting experiences for players. In this article, I’ll describe the tech that went into reworking the League champion Mordekaiser.

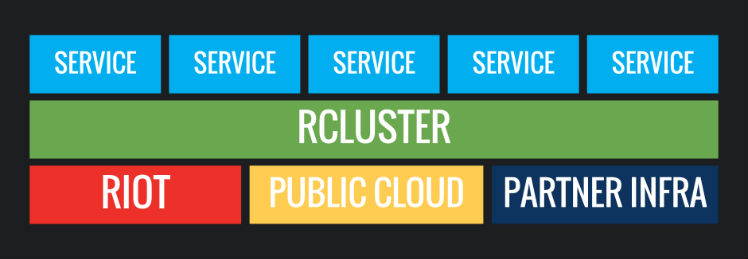

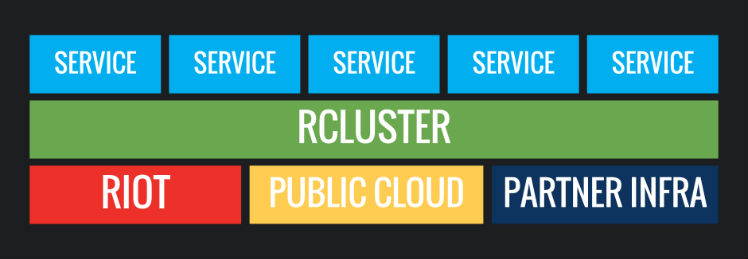

Welcome back to the Running Online Services series! This long-running series explores and documents how Riot Games develops, deploys, and operates its backend infrastructure. Since 24 months is an eternity in this space, we figured we would update you all on how things have worked out, new challenges we faced, and what we learned addressing them!

Our technical interns worked with fellow Rioter technologists on everything from game engineering to developer tooling. Before they left, we asked some of them to share projects they contributed to, and to tell their stories of interning at Riot.

Hi, I’m Doug Lardo, a solutions architect at Riot Games. In this article, I’m going to introduce the concept of Fault Injection Testing, and talk about the Riot Games API and how the team behind it implemented this kind of testing. Then I’ll discuss our testing methods, what we found, and soapbox a little bit about high availability design along the way.

A whole new game means a whole heap o’ new technology. While we won’t be able to cover all of it in one go, I’d love to give you a glimpse at how we add features to LoR. I’ll describe our process of making it work so we can playtest it, move on to making it presentable using our suite of artist tools, tweaking it for balance, and finally making it shine by adding motion and sound before sending it out to players.

We’re the Esports Technology Group, and we’re responsible for the tech behind Riot’s biggest esports events, from reliable network connectivity to global broadcast capabilities to specialty tournament servers to the custom PC fleet used by pros. Part of our role at Riot is to approach typical broadcast and live production challenges with scalable and technology-driven solutions.

Hiya folks, Brian "Penrif" Bossé, your local friendly Tech Lead of League here. I'm taking some time in between matches of TFT to wax philosophic about game engines and how we on League make decisions around what direction to take our custom game engine. Join me on a moderately long look at one dimension of game engine design, where League currently exists on that dimension, and where we're taking the game from there.