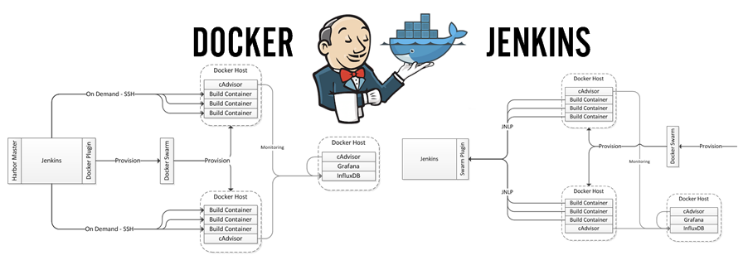

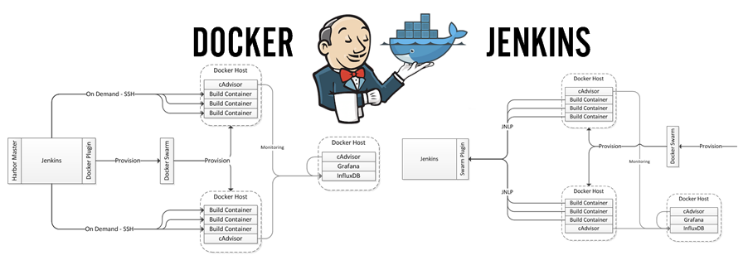

This is the in-depth tutorial for the discussion started here about creating a build farm using Jenkins with Docker containers as the build slaves. When we’re done, you should have a fully functional Jenkins environment, running locally, that provisions build slaves dynamically and is ready for you to productionize.

In this first tutorial in the Docker series, you’ll learn:

- What we’re trying to accomplish at Riot
- Basic setup for Docker
- Basic Docker Pull Commands
- How to run Docker Containers as Daemons
- Basic Jenkins configuration options

It’s been over two years since I first wrote an article discussing how we combined Docker containers and Jenkins to create ephemeral build environments for a lot of our backend software at Riot Games. Today the series is seven articles strong and you’ve rewarded us with feedback, conversation, technical insights, tips, and stories about how you too use containers to do all kinds of interesting things. In the world of technology, two years is a long time. The series, while still useful, is out of date. Many of the latest Docker doodads and gizmos are absent.

In this tutorial, you’ll learn:

- Various approaches to running builds with Jenkins inside of Containers
- Which decisions we made as a team and why
- Lessons we learned while operating our platform
- Context for the in-depth tutorial about how to create your own ephemeral build environments using Jenkins

Over the past several months I’ve published six articles that discuss using Docker and Jenkins to containerize a build farm. Recently, I went on the road to tell the story at DockerCon 2016 and gathered a tremendous amount of amazing feedback. In fact, the best part of this whole experience has been the conversations we’re having with folks encountering similar challenges. In this short post, I’d like to accomplish two things: share the video of my DockerCon talk, and respond to requests we’ve received to consolidate my articles into a single place.

Containers have taken over the world, and I, for one, welcome our new containerized overlords.

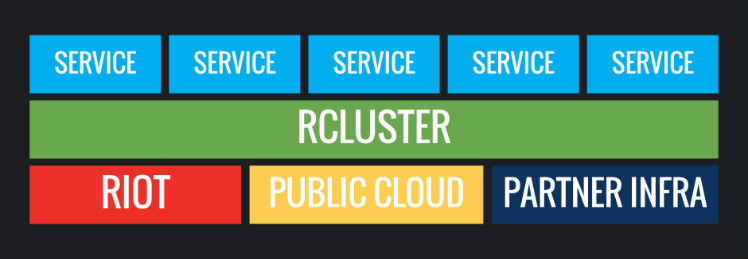

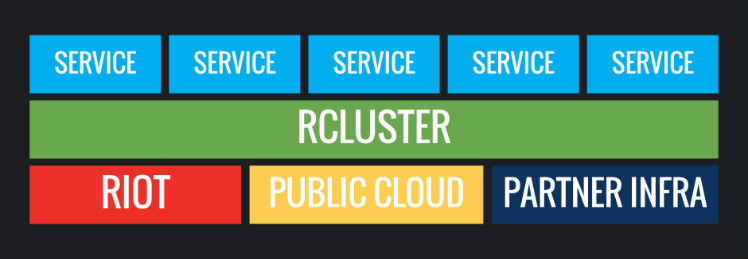

Our names are Kyle Allan and Carl Quinn, and we work on the infrastructure team here at Riot. Welcome to the second blog post in our multi-part series describing in detail how we deploy and operate backend features around the globe. In this post, we are going to dive into the first core component of the deployment ecosystem: container scheduling.